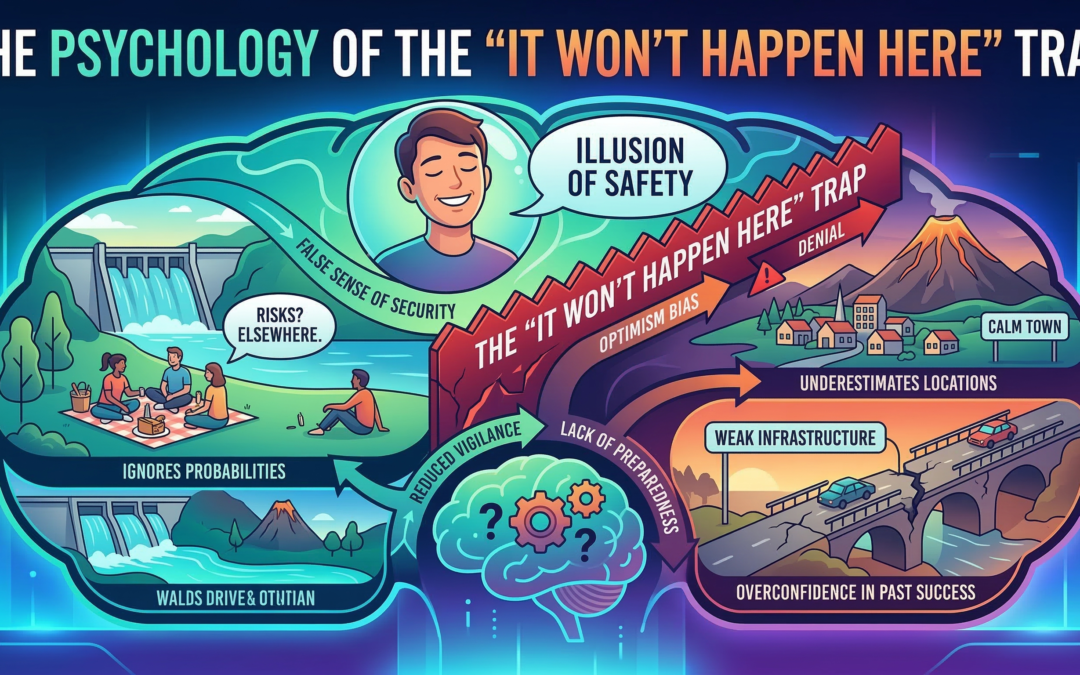

The Psychology of the “It Won’t Happen Here” Trap

There is a particular kind of confidence that lives in people who have never experienced a serious disaster. It is not arrogance, exactly. It is something quieter and more stubborn — a background assumption that the bad things happening elsewhere will continue to happen elsewhere. Researchers have a name for it: optimism bias. And it is one of the most reliably dangerous mental habits a person can have.

The Brain’s Comfort Math

When we hear about a flood in another country, a wildfire destroying a town three states over, or a grid failure paralyzing a city we’ve never visited, our brains perform a quiet calculation. We note the distance. We note the differences between there and here. We conclude, without much conscious effort, that the gap between their situation and ours is protective.

This is not irrational on its face. Distance and context do matter. But the brain doesn’t stop at reasonable inference — it goes further. It inflates the differences and deflates the similarities. It treats the gap as a guarantee rather than a variable. The result is a person who is genuinely, sincerely convinced that their city, their neighborhood, their household is sitting in a kind of permanent exception.

Psychologists studying this pattern have found it across cultures and income levels. It is not a failure of intelligence. Some of the most analytically sharp people hold this belief most firmly, because they are better at constructing arguments for why the exception applies to them.

Normalcy Bias: The Freeze Beneath the Surface

Closely related to optimism bias is what researchers call normalcy bias — the tendency to underestimate both the likelihood and the severity of a disaster because nothing like it has happened before in your experience.

Normalcy bias is why people in the path of a Category 5 hurricane stay home. It is why workers in the World Trade Center went back to their desks after the first plane hit, waiting for an announcement telling them what to do. It is why entire towns have watched floodwaters rise slowly and chosen not to move, because the water had never come this far before.

The brain uses the past as a map for the future. When the future contains something that has no precedent in your personal history, the brain struggles to model it accurately. It defaults to the nearest familiar pattern and treats the situation as less severe than the evidence suggests. This is not a bug in human cognition — it was adaptive for most of human history, when genuine novelty was rare. But in a world with cascading infrastructure, extreme weather, and complex supply chains, it quietly gets people killed.

Why Intelligent People Are Not Immune

There is a common assumption that education and critical thinking inoculate a person against these biases. The research consistently says otherwise. In some cases, higher analytical ability actually increases a person’s capacity to rationalize away warning signs, because they can build more sophisticated justifications for staying put.

This phenomenon — sometimes called “smart person’s paralysis” in disaster psychology literature — shows up repeatedly in post-disaster interviews. Survivors frequently describe their pre-event reasoning as airtight. They had thought through the scenarios. They had weighed the probabilities. They had concluded that the risk was being overstated, that authorities were being overcautious, that the historical record did not support the alarm.

The problem was not that they lacked information. The problem was that they applied their intelligence to confirming a conclusion they had already emotionally committed to — that this was not their crisis to manage.

The Role of Social Proof

Human beings are profoundly social in their risk assessment. We do not evaluate danger purely on objective information. We evaluate it in part by watching what people around us are doing.

When a warning is issued and most of your neighbors stay home, that behavior registers as data. If the people who live here, who know this place, are not leaving — then maybe it is not as serious as the alert suggests. This is not foolishness. In ordinary life, reading social cues is a highly reliable shortcut. The problem is that in a genuine emergency, social cues are often wrong, because everyone is looking at everyone else and making the same calculation simultaneously.

This dynamic helps explain how communities can be almost unanimously wrong in the face of a real threat. Nobody panicked, so nobody moved, so nobody survived to correct the assumption.

What Actually Shifts the Pattern

The research on what changes these patterns is humbling. Information campaigns, on their own, do not work particularly well. Telling people that disasters happen, that they could happen here, that the statistics support concern — this moves the needle less than anyone would hope.

What does work, to a meaningful degree, is prior personal experience. People who have lived through a serious emergency — even a relatively minor one — carry that experience as a reference point that overrides abstract reasoning. They do not need to imagine what it feels like because they already know.

The second thing that works is what researchers call “protective action” framing — giving people something specific and manageable to do. Vague threat awareness tends to produce anxiety and inaction. Specific, achievable preparation tends to produce engagement. The brain resists helplessness. When preparation is framed as a series of small, doable steps rather than a confrontation with terrifying possibilities, people are significantly more likely to act.

The third is community. When preparation is normalized in a social group — when neighbors talk about it, when it is not treated as paranoia or eccentricity — individuals within that group are far more likely to take it seriously. Social proof cuts both ways.

What This Means Practically

None of this requires believing that catastrophe is imminent. The point is not to live in a state of elevated fear. The point is to notice when your confidence that “it won’t happen here” is not actually based on evidence — when it is a feeling dressed up as analysis.

The question worth sitting with is simple: if a serious disruption did happen in your area — extended power failure, supply chain collapse, civil unrest, extreme weather — what would your first 48 hours actually look like? Not theoretically. Practically. What do you have, what do you lack, and who would you call?

Most people find that question uncomfortable not because the answer is hopeless, but because they have never thought it through. That discomfort is information. It is the optimism bias noticing that it has been caught.